We Need to Talk About Agents

What GM's $40B mistake can teach Vertical AI founders

The debate about AI and automation often swings between two extremes: excited claims that AI will replace all knowledge workers by the end of the decade, and anxious concerns that enterprise AI pilots are faltering and failing to provide tangible economic value. Both views miss the mark.

It’s becoming increasingly clear that the picture-perfect “AI replacing workers” story doesn’t add up. Evidence linking AI to hiring slowdowns has been muddled at best, while productivity claims have been hard to measure (some have even been disproved by available data). The “AI-Washing” of job cuts — executives using AI automation to try to flip the negative connotations of layoffs into a positive shareholder narrative — has become pervasive.

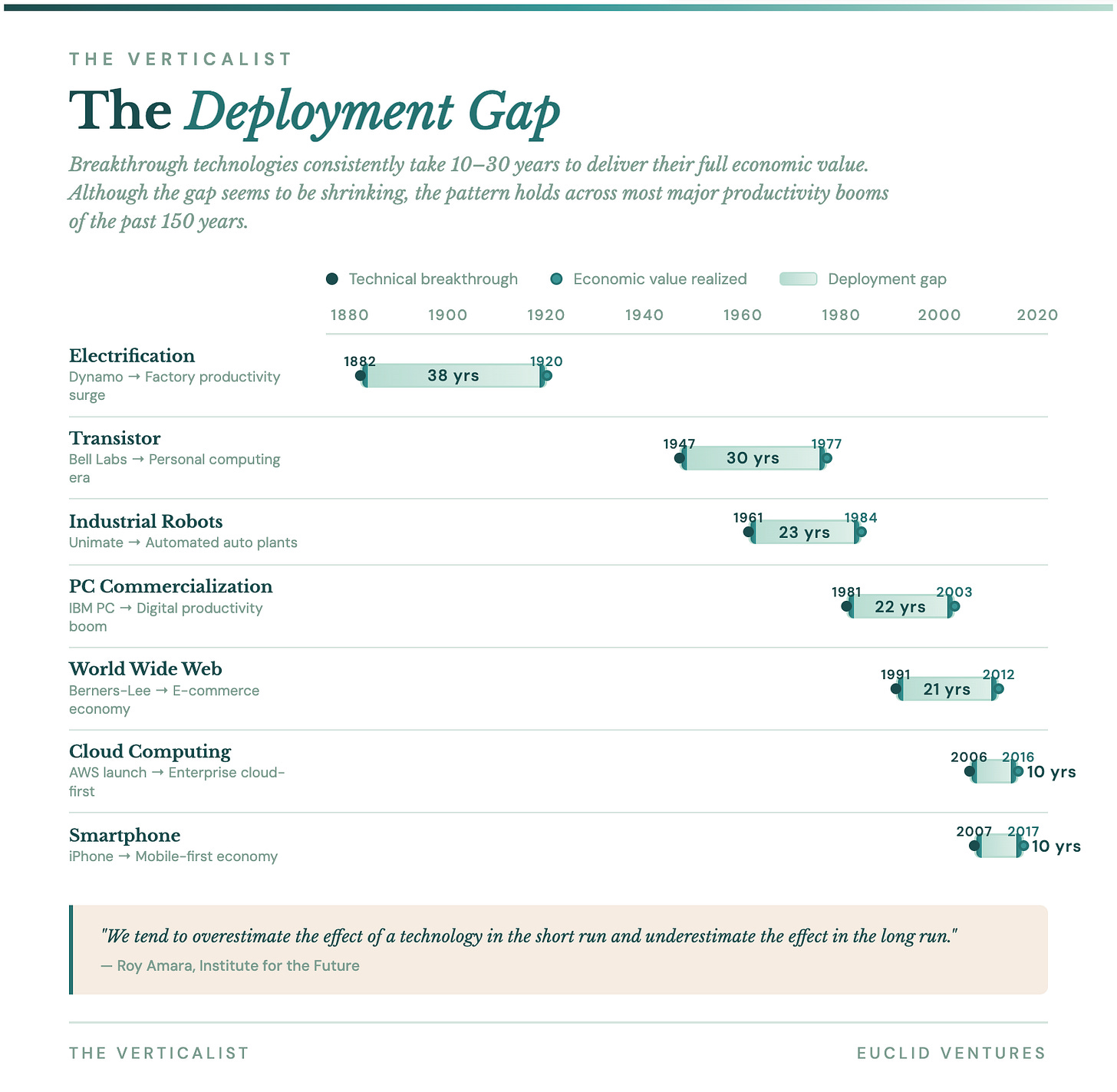

None of this means that LLM-era AI isn’t fundamentally transformational, nor that its economic potential is capped. We’re just in the early innings of adoption. It is easy to forget that there was a 20-year gap between the commercialization of the personal computer (by IBM in 1981) and the peak of the digital productivity boom at the turn of the century.1

The single-mindedness of the current AI automation narrative is easy enough to explain: incentives, incentives, incentives. The loudest voices on AI’s labor impact have had the most to gain from speaking up — from the execs running frontier labs to CEOs right-sizing their workforces in the post-2021 era to VCs stirring up hype to justify ever-larger AUMs.

But it’s becoming a problem.

The Church of Agenticism

Hyperbolic AI narratives have now graduated from high-level economic pronouncements to product positioning itself. Every AI-powered feature is now an "agent." Conference panels want to discuss "managing your AI workforce." Every enterprise buyer is being told they need "digital co-workers." Founders are giving their agents names, personalities, and even human faces. Some startups are gauging candidates by how “agentic” their mindsets are.

The term agent — an inherently vague word meaning “one who acts” — is starting to collapse under the weight of its own ubiquity. While its rise was fueled, at least in part, by the desire to make AI more accessible and urgent for enterprises, it may be having the opposite effect. When everything becomes an “agent,” it's nearly impossible for buyers to tell what any given product actually does, how it fits into existing operations, how they should interact with it, or why it matters to their bottom line. In Vertical AI specifically, this is disastrous.

While there are verticals in which exploratory or performative AI adoption is real2, most industry buyers don’t care about AI — they care about results. Good Vertical AI is a capability infrastructure: a technological foundation that expands what organizations can accomplish, well beyond what is possible through any combination of humans and traditional software. Buyers and stakeholders need a clear way to understand the function of Vertical AI: its potential to unlock new business opportunities and strengthen industry-specific workflows. By focusing on form with metaphors like “agent”, by contrast, we risk obscuring the real opportunity, alienating the buyers who will drive adoption.

To be clear, agentic systems (AI that can accomplish complex, multi-step workflows in pursuit of goals) represent a genuine and meaningful capability shift. And innovators have always been eager to coin new “categories” that capture mindshare and warm audiences to their product, for good reason. Metaphors are the heart of product marketing: key cognitive tools that shape how buyers evaluate, how organizations adopt, how new markets develop, and even how products themselves evolve.

We oppose Agenticism because it peddles a risky myth: that buyers won’t need to alter their business practices to enjoy the great benefits of AI. This has happened before.

GM Builds the “Factory of the Future”

On October 20, 1984, The New York Times published an article titled, “GM Factory of the Future Will Run with Robots.” Roger Smith, then GM’s CEO, argued that automation would help the company compete with increasingly formidable Japanese rivals. Smith bet his tenure on automation: GM would invest an estimated $40-45B in factory robots in the mid-80s. It wasn’t GM’s first shot at owning the narrative of the future, but it would be its most expensive by a long shot.

Smith’s premise was simple: replace costly, unionized labor with tireless machines, keep the workflow and assembly line the same, and profit from the resulting productivity gains. Better, cheaper, faster! What actually happened, however, is a cautionary tale about the difference between adopting and buying technology. GM placed robots exactly where human workers had been. Job roles stayed the same. The pace and sequence of the assembly line were unchanged. The robots acted as obedient replacements for labor, integrated into a system designed around human limitations.

Unfortunately, GM’s implementation was widely panned as a disaster. Smith’s robotic factories struggled to match the productivity of their human-run forebears. Robots sometimes painted each other instead of cars, or welded car doors shut. Manufacturing costs increased.

Toyota, working with similar robotic technologies, asked a fundamentally different question: what becomes possible when a new capability is introduced into the system? That shift in perspective changed everything. Plant layouts were redesigned. Work cells were reorganized. Quality feedback was made more immediate. Human workers moved from task execution to overseeing the system. The factory transformed into a coordinated human-machine environment, not just a human workflow with machines attached.

Both companies had access to the same tools. Only one reconsidered the architecture around them. Ultimately, GM’s implementation fell short because it focused on swapping people for machines rather than on infrastructure to enhance capabilities. The long-term divergence in productivity and competitive advantage developed from that initial framing choice.

Stanford economist Paul David documented the same pattern, from a century earlier, in his landmark study of factory electrification. In the late 1800s, factories ran on steam: a single massive engine turning an overhead drive shaft that powered every machine in the building through an intricate web of belts and pulleys. The entire factory layout was dictated by proximity to that shaft. When electric motors first became available, factory owners did the obvious thing: they ripped out the steam engine and bolted an electric motor in its place. The shaft kept turning. The belts kept running. Productivity barely moved.

It took thirty years before a new generation of manufacturers realized that electricity’s real advantage was distributed power—a small, independent motor on every machine, freeing the factory floor from the tyranny of the shaft. Suddenly, layouts could follow the logic of production rather than the logic of power transmission. Single-story factories replaced multi-story ones. Assembly lines became possible. Workers gained autonomy over their own machines. The productivity gains were transformative, but they required abandoning the architecture that steam had imposed.

The “agent” discourse repeats the same automation mistakes for the modern era. When AI is cast as a co-worker, the unit of adoption becomes the role, not the workflow or the system in which that workflow is embedded. Executives begin asking HR questions, and the value derived is reduced to cost savings. What’s the defensibility of selling cheaper work? Perhaps the next widget seller will undercut your pricing even further.

Funnily enough, this wasn’t even GM’s first time making this mistake with technology. In 1919, the company entered the tractor business with a machine called the Samson Iron Horse, which was steered with leather reins so farmers would feel more comfortable than with a steering wheel. It flopped immediately, and GM exited agriculture by 1922, having learned nothing it would remember when the robots arrived sixty years later.

The Co-Worker Problem

The co-worker analogy seems harmless and helpful because it makes complex technology feel familiar. An entrepreneur can sell a vision of augmenting or even replacing specific staff members. It’s simple to understand and, hypothetically, easier to sell. It’s also straightforward to pitch the vision to investors and stakeholders: a large amount of money is spent on labor performing specific tasks that we can automate. Easy to explain, easy to grasp.

Perhaps unsurprisingly, the robot-powered “factory of the future” that GM’s CEO promised was praised at the time and made him a press darling. He became a champion of U.S. manufacturing who captured the public’s imagination.

Described as an “innovator,” “visionary,” and “21st century futurist,” Smith was named Automotive Industries’ Man of the Year and Advertising Age’s Ad Man of the Year, honored with the Financial World Gold Medal (best CEO in America), and designated by The Gallagher Report as one of the ten best executives in America. With such acclamation, it seems little wonder that “GM completed the 1980s in a state of arrogance.”3

It turns out that selling the replacement of labor with robots (or AI) to the press and investors was the only successful part of the GM initiative. The reason the “co-worker” metaphor is flawed is that it draws attention to staffing rather than to system design. It produces local gains (e.g., less headcount or individual employee productivity) while leaving the underlying structure untouched. Now consider how this plays in vertical markets—construction, healthcare, logistics, legal, food services—the industries where AI has the most transformative potential and where the “agent” framing does the most damage.

These buyers don’t wake up wanting an agent. They face a scheduling problem, a compliance bottleneck, and an intake workflow that’s losing money. Their procurement process relies on PDFs and phone calls. The customers most likely to benefit from Vertical AI are often the least tech-savvy, the most skeptical of hype, and the most allergic to buzzwords. Calling your product an “agent” shifts the focus from the problem to the technology. It’s the same category of mistake founders make when they lead with their product, or worse, their tech stack, rather than the customer’s pain.

Unsurprisingly, the mistake with GM extended beyond the metaphor; the entire automation process was flawed. It exhibited a poor understanding of the assembly line and the workflows that comprised it. It viewed labor as fungible units to be replaced, while assembly plants are especially complex environments. As the old adage goes, there are “few ways to lose money faster than automating a process you don’t understand.”

GM failed not because its robots were bad, but because it treated them as direct replacements for people. Leveraging automation necessitates understanding both the intended and actual “work to be done.” Successfully deploying Vertical AI requires a similar insight: you must understand the work so you can re-architect it to maximize its benefits from AI. Otherwise, you risk creating tools that sound great in a pitch deck but are ultimately misaligned with the actual process being automated — and incapable of realizing the transformative potential of AI.

Capabilities, Not Agents

The “agent” metaphor replicates this misalignment on a large scale. It presents AI as a replacement for labor, when the true opportunity—especially in vertical markets—is to broaden what a business can achieve. Consider the two categories of work in which Vertical AI is best positioned to operate. The first is administrative: scheduling, recording, invoicing, intake—back-office work that is time-consuming, inefficient, and likely not done well. The second, and far more interesting category, is the work not done: bids not submitted, calls not answered after hours, patients not seen, inspections not performed. This is the biggest unknown and the most compelling opportunity. It does not show up in a headcount reduction model. It shows up in revenue growth.

The distinction matters enormously for how we size these markets and how we sell into them. Much of the narrative around AI’s value has centered on direct labor replacement, given the immediate profit potential for buyers. But we believe AI’s greater impact—and the larger market opportunity—will be its ability to enhance workforce productivity rather than simply substitute for it. To bring this into focus, consider the hidden revenue lost in “work not done.” For instance, in industries like construction or legal services, missing out on even 10% of potential bids or after-hours service requests can translate into billions in unrealized revenue. If AI-enabled systems could capture just a fraction of these missed opportunities, the value unlocked could dwarf the savings from labor-reduction models. Making the “work not done” visible gives buyers a concrete, numbers-driven reason to rethink how they adopt technology.
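To make that comparison concrete, here is a minimal back-of-the-envelope sketch in Python. Every figure in it is hypothetical and chosen only for illustration: the `Firm` fields, the 400 opportunities, the response and win rates, the deal value, and the back-office cost are all assumptions, not data from any real company. The point is only the shape of the two models.

```python
from dataclasses import dataclass


@dataclass
class Firm:
    """Hypothetical firm; all fields are illustrative assumptions."""
    opportunities_per_year: int  # bids or requests the firm could respond to
    response_rate: float         # share it actually responds to today
    win_rate: float              # share of responses that turn into revenue
    avg_deal_value: float        # revenue per won deal, in dollars
    back_office_cost: float      # annual administrative labor cost, in dollars


def labor_savings(firm: Firm, automated_share: float) -> float:
    """Substitution model: value is the admin cost that gets automated away."""
    return firm.back_office_cost * automated_share


def recovered_revenue(firm: Firm, recovered_share: float) -> float:
    """Expansion model: value is revenue from 'work not done' that now gets done."""
    missed = firm.opportunities_per_year * (1 - firm.response_rate)
    return missed * recovered_share * firm.win_rate * firm.avg_deal_value


# Made-up inputs for a mid-sized contractor.
firm = Firm(
    opportunities_per_year=400,
    response_rate=0.6,
    win_rate=0.25,
    avg_deal_value=250_000,
    back_office_cost=600_000,
)

savings = labor_savings(firm, automated_share=0.5)
revenue = recovered_revenue(firm, recovered_share=0.25)

print(f"Labor savings:     ${savings:,.0f}")
print(f"Recovered revenue: ${revenue:,.0f}")
```

Under these made-up inputs, the recovered revenue is several times the labor savings. Note that it is top-line revenue rather than profit, so a real model would also need margins and delivery capacity.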

This is the Toyota playbook, applied to vertical markets: when a new capability enters the system, the system should reorganize to exploit it. Freeing up administrative work may require less headcount in the back office, but tackling the work that remains should have the opposite effect—enabling businesses to take on more projects, more customers, and more patients, and to grow their revenue. More business will likely require more headcount, not less. In a steady state, the more productive firms will combine enhanced labor productivity with AI to grow and outperform.

When you frame AI as an "agent"—a digital worker—you implicitly promise substitution. When you frame it as a capability, you promise expansion. The second framing is more accurate, more attractive to buyers, and more aligned with how technology has actually transformed industries throughout history.

A concise comparison crystallizes the difference:

The richest upside comes from treating AI not only as a path to speeding up the status quo, but also as infrastructure to unlock new ways of doing the work altogether.

The “Moving Boundary” Between AI & Humans

There is a subtler strategic point here that the agent discourse obscures entirely. As AI capabilities improve, human roles won’t disappear; they migrate. The customer support representative is pulled into the boundary region where a customer is distressed but not saying why, where empathy requires overriding policy. The urban planner is freed from traffic modeling to mediate between incompatible visions of what the city should be. The construction project manager stops manually tracking submittals and starts managing more complex, higher-margin jobs. Even in AI services, the human transitions from rote execution to the paradigm of action that models learn from at the edge.

In vertical markets, this migration is the entire value proposition. The pitch is not “we’ll replace your people.” The pitch is “your people will be able to do more of the work that actually matters—and more of it.” That is a very different sale, and it requires a very different story than “agents replacing humans.”

The Vertical AI companies gaining real traction understand this intuitively. Abridge doesn’t sell itself to health systems as an “agent.” Instead, it redesigned the documentation workflow so that a patient conversation automatically becomes a structured clinical note inside the EHR. The physician’s role migrates from hours of after-hours charting to reviewing and signing off on AI-drafted notes. Clinicians using Abridge report 86% less effort on documentation and 60% less after-hours work. That’s a much more powerful value prop (and business case) than selling a note-taking agent.

In legal, EvenUp has built a claims intelligence platform for personal injury firms that automates demand drafting, medical chronology creation, and case analysis across the entire lifecycle of a claim. Firms using EvenUp report that their drafting output has tripled and that average settlement timelines have been cut by a month, all without adding headcount. The attorney’s role shifts from documentation to higher-value cases within the firm.

In construction, BuildVision helps manufacturers, distributors, and suppliers automate the manual back-and-forth of the selling process—ingesting and normalizing PDFs, product specs, and unstructured data that previously lived in email threads and phone calls. The sales rep doesn’t get replaced; they get freed from the drudgery that kept them from responding to more prospective jobs, and even taking on higher-margin, more complex jobs. None of these companies leads with “agent.” They lead with the workflow, and the results follow.

GM’s CEO lacked this strategic vision. Plant closures and layoffs characterized his leadership. Replacing humans with robots was the end goal. He famously told auto workers: “Every time you ask for another dollar in wages, a thousand more robots start looking practical.”

Toyota’s engineers, on the other hand, understood that once new capabilities enter a system, the system either adapts to exploit them or resists and diminishes them. The business process (and eventually the organization) becomes a moving boundary between what the technology can reliably handle and what humans must still provide. Leading such an organization requires attention to the evolution of that boundary, not a static model of which “digital workers” occupy which roles.

Similarly, agents (or, in Toyota’s case, robotic automation) alter the economic value of human capabilities. When an agent reliably handles a task, the economic importance of the skill required to perform it decreases, while the value of other human capabilities increases. This dynamic appears similar to augmentation but is fundamentally different. Augmentation relies on the idea that the worker remains central to the workflow, viewing AI as an enhancement of their capabilities. As one essay on the topic explains:

As agentic capabilities improve and force the structure of the work to reorganize around them, without proactive human-agent systems design, workers no longer define the workflow; they occupy the segments where agentic capabilities fail, get bottlenecked, or hit their limits. The effect may look like augmentation from a distance, yet the underlying logic is different: the system expands outward through agentic execution, and humans are continually reallocated to the frontier where reliability breaks and human capabilities like judgment, interpretation, and governance create distinct value.

Words Matter More Than You Think

Our anti-Agenticism is more than a semantic quibble. AI has a public perception problem today. Only 13% of respondents in a recent survey believe artificial intelligence will do more good than harm. The “stop hiring humans” billboards, the flippant commentary from tech CEOs about mass job elimination, the casual framing of AI as a replacement workforce—all of it feeds resistance in exactly the markets where AI can have the most impact.

The industries with the worst productivity—construction, healthcare, transportation—are also the ones with the strongest cultural attachment to human labor and the deepest skepticism of technology. These sectors account for 37% of total hours worked in the US economy but contribute only 24% of output. Their productivity CAGR has been below 0.5% since 2005. Construction has actually gotten less productive. There is no credible path to restoring historical productivity growth without a digital transformation of these markets. And there is no path to digital transformation if the people running these businesses think AI is coming for their livelihoods.
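The size of that gap can be read straight off the two shares quoted above. A rough sketch, assuming the 37% hours share and the 24% output share are measured on a comparable basis (the variable names are illustrative):

```python
# Shares quoted in the text for construction, healthcare, and transportation.
hours_share = 0.37   # share of total US hours worked
output_share = 0.24  # share of total US output

# Output per hour relative to the economy-wide average (average = 1.0).
sector_index = output_share / hours_share

# The same index for everything outside these sectors.
rest_index = (1 - output_share) / (1 - hours_share)

# How an hour in these sectors compares with an hour elsewhere.
relative_gap = sector_index / rest_index

print(f"These sectors vs. economy average: {sector_index:.2f}")
print(f"Rest of economy vs. average:       {rest_index:.2f}")
print(f"These sectors vs. rest of economy: {relative_gap:.2f}")
```

On those numbers, an hour worked in these sectors yields roughly 65% of the economy-wide average output, and a little over half of what an hour yields in the rest of the economy.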

The “machines versus people” framing is simply bad business. This dynamic has caused labor tensions, slowed down technology adoption, and harmed organizational performance for over a century. The answer to disruption is not restraining innovation. But neither is it to talk flippantly about job losses or to sell your technology as a human replacement. Founders should sell the broader promise of Vertical AI: technology that boosts productivity and drives growth, not just cuts costs.

GM CEO Roger Smith was a highly intelligent executive with good intentions who failed because he misunderstood the leverage of automation. For Smith, robotics represented the Holy Grail, the ultimate solution that could fix all of GM’s problems at once. Unfortunately, he was wrong. By the time Smith retired in 1990, GM had shifted from the lowest-cost producer in Detroit to its highest-cost producer, largely because of the push to acquire technology that never paid off. Toyota, on the other hand, prospered. By 1990, it had surpassed GM in profit per vehicle by a factor of three, despite producing fewer cars. In 2008, it overtook GM as the world’s largest automaker, a position GM had held for 77 consecutive years.

The companies that succeed in Vertical AI will be those that, like Toyota, understand the moving parts of the system, not those selling a shinier robot for the same assembly line. If you’re a founder working in vertical markets, the practical and immediate implication is to drop the word “agent” from your pitch deck, landing page, and sales calls. Focus on the workflow you’re redesigning, the capacity you’re unlocking, and the outcome you’re delivering.

If we could leave you with one thought, it’s this:

Toyota never sold a robot — they just shipped a better car.

Thanks for reading The Verticalist!

Euclid is a VC backing Vertical AI founders from inception. If anyone in your network is working on a new startup in this space, we’d love to help. LinkedIn is the best place to reach us.

Benzell, Brynjolfsson, Rock (2020). Understanding and Addressing the Modern Productivity Paradox. MIT Work of the Future Task Force.

Big Law, perhaps?

Choudary (2026). Stop Managing Your AI ‘Workforce’, Start Allocating AI Capabilities. The Kyndryl Institute.

Finkelstein (2003). Case Study: GM and the Great Automation Solution. Tuck School of Business, Dartmouth College.

Kieffer, Repenning (2025). 2 MIT Professors Offer a Case Study to Consider for AI Adoption: GM Versus Toyota in the 1980s. Fortune.
